I am happy to announce that this year, three more of our papers dealing with robot self-calibration were accepted (one journal paper and two conference papers).

This long-term project runs under the excellent supervision of Mgr. Matej Hoffmann, Ph.D. (see his website), who studies how babies develop a sense of their bodies and of the space around them, and how self-touch might contribute to this (GACR project, EXPRO project). This is also closely connected to self-calibration in humans, who might learn about the kinematics of their bodies by touching individual body parts.

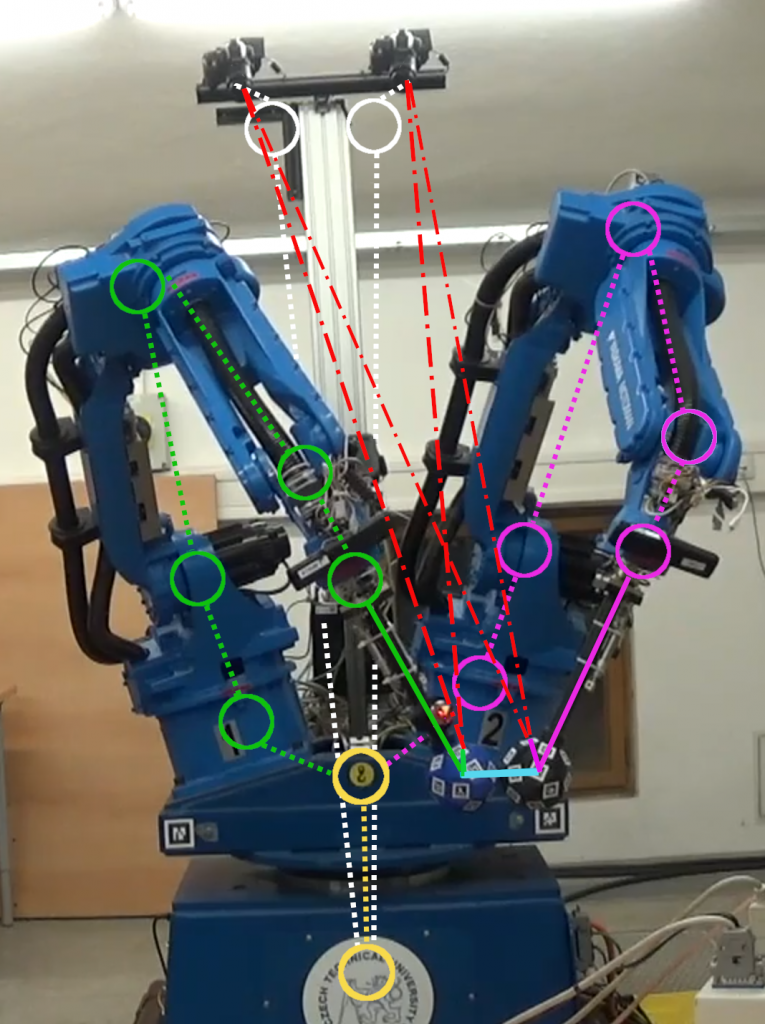

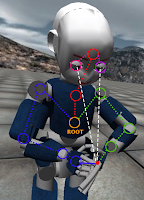

In our work, we try to transfer these ideas to robots and explore whether this new sensory modality can be useful for their calibration. We focus mainly on humanoid robots, but we also explore how useful these methods are for calibrating industrial robots.

This year, our long journey culminated in three papers: two conference papers at the Humanoids conference and one journal paper in the Robotics and Computer-Integrated Manufacturing (RCIM) journal.

Matej Hoffmann has prepared a nice summary of the whole project on his website: Automatic robot self-calibration.

These papers nicely complement our earlier paper, in which we explored, on a simulated iCub humanoid robot, how using multiple kinematic chains created by self-touch might improve its calibration.